Summary

Nowadays, it is becoming increasingly difficult to efficiently manage available computational and storage resources, to provide applications with transparent access to these resources, and to ensure performance isolation and fairness across different workloads. The BigHPC project will address these challenges with a novel management framework for Big Data and parallel computing workloads.

In this sense, BigHPC will simplify the management of Big Data applications and HPC infrastructural resources. This will have a direct impact on science, industry and society, by accelerating scientific breakthroughs in different fields and by increasing the competitiveness of companies through better data analysis and improved decision-support processes.

The project will advance the state of the art and develop new tools to address the main challenges of HPC infrastructures, namely in their monitoring, virtualization and storage management components. By the end of the project, BigHPC is expected to integrate these three components into a new platform, enabling a more efficient use of these infrastructures and their services.

Expected Outcomes

- A novel solution to manage and monitor HPC and Big Data workloads that:

1) combines novel monitoring, virtualization and software-defined storage components;

2) can cope with HPC’s infrastructural scale and heterogeneity;

3) efficiently supports diverse workload requirements while optimizing holistic performance and resource usage;

4) can be seamlessly integrated with existing HPC infrastructures and software stacks;

5) will be validated with pilots running on both the MACC and TACC infrastructures.

| Start Date – End Date: | March 31, 2020 – June 30, 2023 (originally March 31, 2023) |
|---|---|
| Scientific Area: | Advanced Computing |
| Keywords: | Big Data, High Performance Computing, HPAI |
| Website: | |
| Lead Beneficiary (PT): | Wavecom – Soluções Rádio S.A. |
| Co-beneficiaries: | INESC TEC – Instituto de Engenharia de Sistemas e Computadores, Tecnologia e Ciência; LIP – Laboratório de Instrumentação e Física Experimental de Partículas – Associação para a Investigação e Desenvolvimento |
| PIs at UT Austin: | Vijay Chidambaram (Department of Computer Science); John Cazes (Texas Advanced Computing Center) |
| Other Partners: | Minho Advanced Computing Center (MACC) |

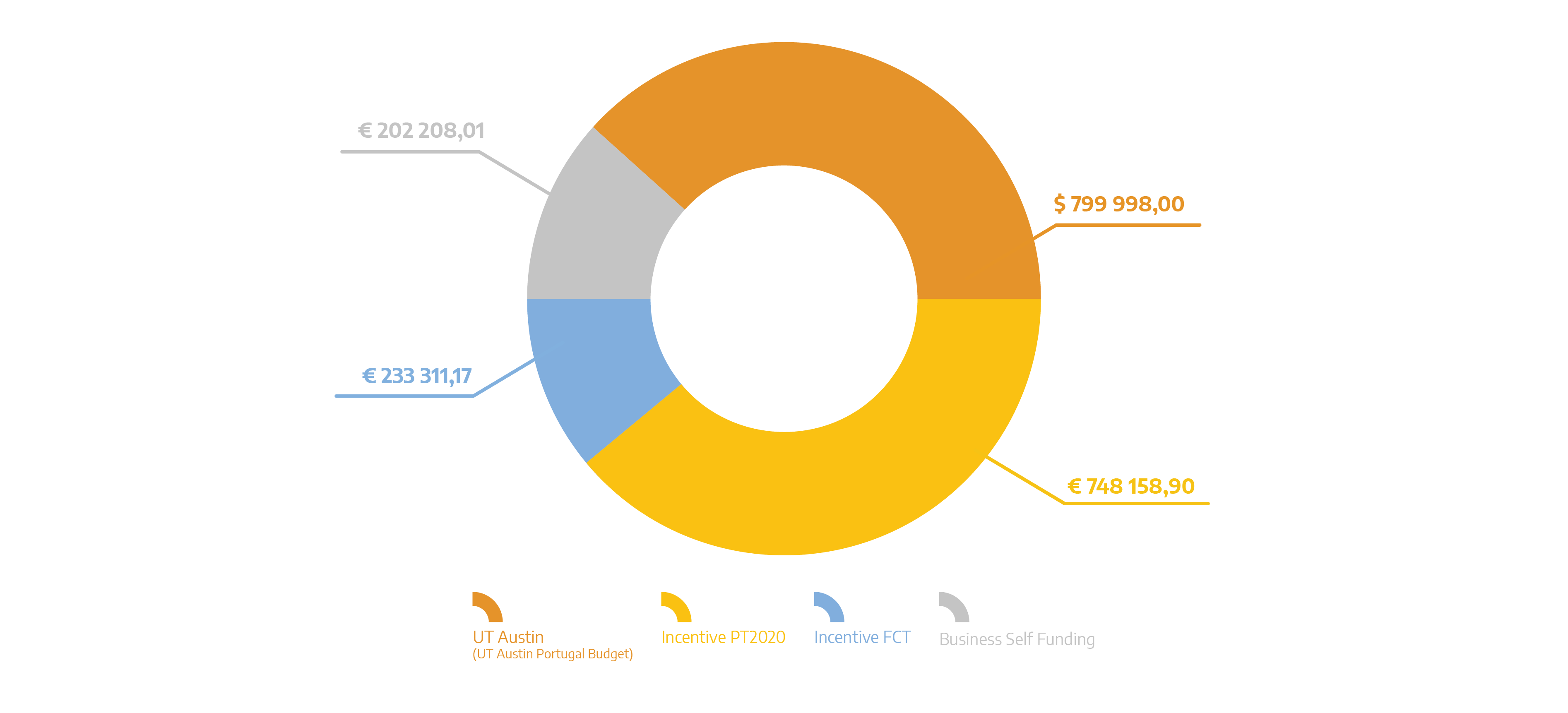

| Total Eligible Investment (PT): | 1 183 678,08 EUR |
|---|---|
| Total Eligible Investment (US): | 799 998,00 USD |
| Funding Sources Distribution: | |

Papers and Communications

- Miranda, M. (2023). Distributed and Dependable Software-Defined Storage Control Plane for HPC. In 2023 IEEE/ACM 23rd International Symposium on Cluster, Cloud and Internet Computing Workshops (CCGridW). IEEE. https://doi.org/10.1109/ccgridw59191.2023.00071

- LeBlanc, H., Pailoor, S., K R E, O. S., Dillig, I., Bornholt, J., & Chidambaram, V. (2023). Chipmunk: Investigating Crash-Consistency in Persistent-Memory File Systems. In Proceedings of the Eighteenth European Conference on Computer Systems. EuroSys ’23: Eighteenth European Conference on Computer Systems. ACM. https://doi.org/10.1145/3552326.3567498

- Fernandes, P. H., & Baquero, C. (2023). Probabilistic Causal Contexts for Scalable CRDTs. In Proceedings of the 10th Workshop on Principles and Practice of Consistency for Distributed Data. PaPoC ’23: 10th Workshop on Principles and Practice of Consistency for Distributed Data. ACM. https://doi.org/10.1145/3578358.3591331

- Shah, A., Chidambaram, V., Cowan, M., Maleki, S., Musuvathi, M., Mytkowicz, T., Nelson, J., Saarikivi, O., & Singh, R. TACCL: Guiding Collective Algorithm Synthesis using Communication Sketches. To appear in the proceedings of the 20th …

- Macedo, R., Miranda, M., Tanimura, Y., Haga, J., Ruhela, A., Harrell, S. L., Evans, R. T., & Paulo, J. (2022). Protecting Metadata Servers From Harm Through Application-level I/O Control. In 2022 IEEE International Conference on Cluster Computing (CLUSTER). IEEE. https://doi.org/10.1109/cluster51413.2022.00075

- da Gião, H. (2022). A model-driven approach for DevOps. In 2022 IEEE Symposium on Visual Languages and Human-Centric Computing (VL/HCC). IEEE. https://doi.org/10.1109/vl/hcc53370.2022.9833125

- Macedo, R., Tanimura, Y., Haga, J., Chidambaram, V., Pereira, J., & Paulo, J. (2022, February). PAIO: General, Portable I/O Optimizations With Minor Application Modifications. In Proceedings of the USENIX Conference on File and Storage Technologies (FAST) (pp. 1-13). USENIX.

- Esteves, T., Neves, F., Oliveira, R., & Paulo, J. (2021). CaT: Content-aware Tracing and Analysis for Distributed Systems. In Middleware ’21: 22nd ACM/IFIP International Middleware Conference. ACM. https://doi.org/10.1145/3464298.3493396

- Faria, A., Macedo, R., & Paulo, J. (2021). Pods-as-Volumes. In Proceedings of the Seventh International Workshop on Container Technologies and Container Clouds. Middleware ’21: 22nd International Middleware Conference. ACM. https://doi.org/10.1145/3493649.3493653

- Miranda, M., Esteves, T., Portela, B., & Paulo, J. (2021). S2Dedup. In Proceedings of the 14th ACM International Conference on Systems and Storage. SYSTOR ’21: The 14th ACM International Systems and Storage Conference. ACM. https://doi.org/10.1145/3456727.3463773

- Faria, A., Macedo, R., Pereira, J., & Paulo, J. (2021). BDUS. In Proceedings of the 14th ACM International Conference on Systems and Storage. SYSTOR ’21: The 14th ACM International Systems and Storage Conference. ACM. https://doi.org/10.1145/3456727.3463768

- Ruhela, A., Harrell, S. L., Evans, R. T., Zynda, G. J., Fonner, J., Vaughn, M., Minyard, T., & Cazes, J. (2021). Characterizing Containerized HPC Applications Performance at Petascale on CPU and GPU Architectures. In Lecture Notes in Computer Science (pp. 411–430). Springer International Publishing. https://doi.org/10.1007/978-3-030-78713-4_22

- Kadekodi, R., Kadekodi, S., Ponnapalli, S., Shirwadkar, H., Ganger, G. R., Kolli, A., & Chidambaram, V. (2021). WineFS. In Proceedings of the ACM SIGOPS 28th Symposium on Operating Systems Principles CD-ROM. SOSP ’21: ACM SIGOPS 28th Symposium on Operating Systems Principles. ACM. https://doi.org/10.1145/3477132.3483567

- Ruhela, A., Vaughn, M., Harrell, S. L., Zynda, G. J., Fonner, J., Evans, R. T., & Minyard, T. (2020). Containerization on Petascale HPC Clusters. State of the Practice talk at the International Conference for High Performance Computing, Networking, Storage and Analysis (SC’20). https://doi.org/10.26153/TSW/12168

- Evans, R. T. (2020). Democratizing Parallel Filesystem Monitoring. In 2020 IEEE International Conference on Cluster Computing (CLUSTER). IEEE. https://doi.org/10.1109/cluster49012.2020.00065